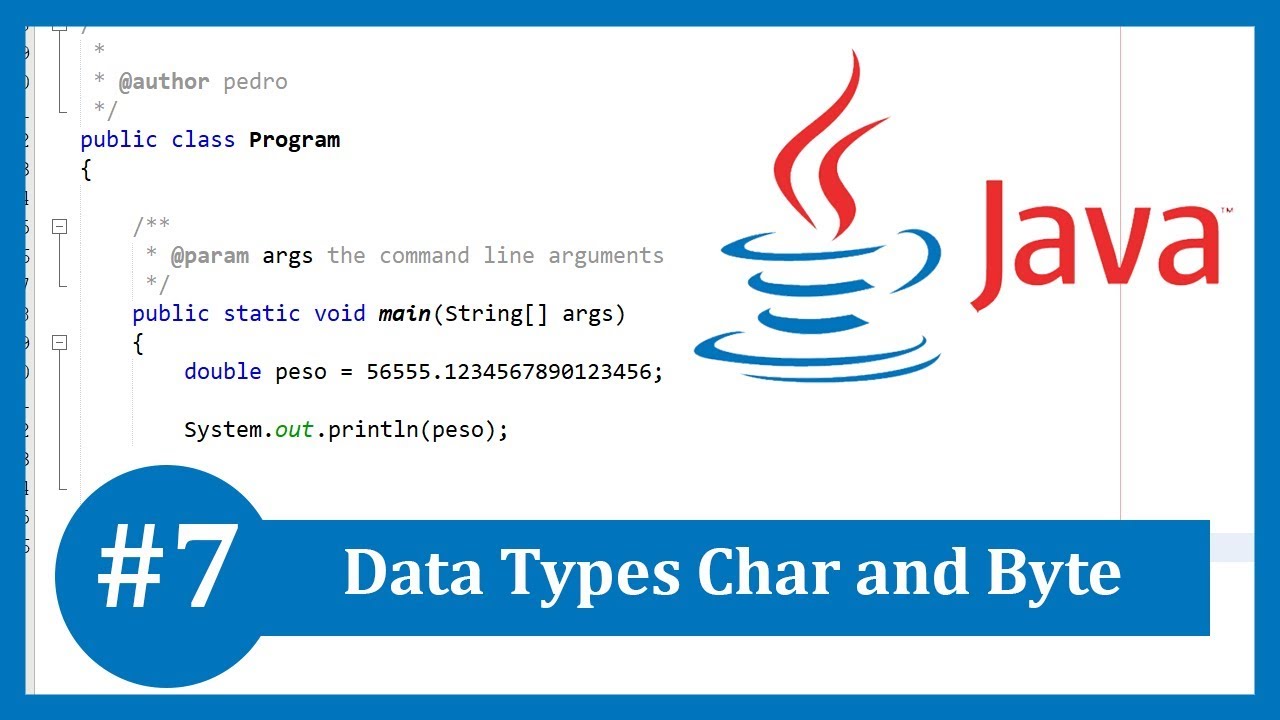

If I may be so brash, it is my opinion that the char type in Java is dangerous and should be avoided if you are going to use Unicode characters. char is used for representing characters (e.g. ‘a’, ‘b’, ‘c’) and has been supported in Java since it was released about 20 years ago.

When Java first came out, the world was a simpler place. Windows 95 was the latest, greatest operating system, the world’s first flip phone had just gone on sale, and Unicode had fewer than 40,000 characters, all of which fit perfectly into the 16-bit space that char provides. Unicode has since outgrown the 16-bit space and now requires 21 bits for all of its 120,737 characters.

Java has supported Unicode since its first release, and strings are internally represented using UTF-16 encoding. UTF-16 is a variable-length encoding scheme. Characters that fit into the 16-bit space take 2 bytes (one 16-bit code unit). All other characters take 4 bytes, stored as a pair of 16-bit code units called a surrogate pair. Every Unicode character in existence, plus room for roughly a million more, can therefore be represented in UTF-16 and thus as Strings in Java.

But char is a different story altogether. Let’s look at its definition from the official source:

“char: The char data type is a single 16-bit Unicode character. It has a minimum value of ‘\u0000’ (or 0) and a maximum value of ‘\uffff’ (or 65,535 inclusive).”

“16-bit Unicode character”? I guess Joel was right: “Some people are under the misconception that Unicode is simply a 16-bit code where each character takes 16 bits and therefore there are 65,536 possible characters. It is the single most common myth about Unicode, so if you thought that, don’t feel bad.”
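The following is a minimal sketch of what goes wrong when a character lies outside the 16-bit range. It assumes Java 8 or later because it uses `String.codePoints()`. The class name `CharVsCodePoint` is hypothetical. U+1F600 (an emoji) is simply an arbitrary example of a character that needs a surrogate pair in UTF-16.

```java
// Hypothetical example class: contrasts char-based and code-point-based views of a String.
public class CharVsCodePoint {

    // U+1F600 is above U+FFFF, so it cannot fit in a single char.
    // Character.toChars encodes it as a UTF-16 surrogate pair (two chars).
    // Note: a literal like '😀' would not even compile as a char.
    static final String GRIN = new String(Character.toChars(0x1F600));

    public static void main(String[] args) {
        // One BMP character ('a') followed by one supplementary character.
        String s = "a" + GRIN;

        // length() counts UTF-16 code units (chars), not characters.
        System.out.println(s.length());                      // 3

        // codePointCount() counts actual Unicode code points.
        System.out.println(s.codePointCount(0, s.length())); // 2

        // charAt(1) returns only the first half of the surrogate pair,
        // which is not a character on its own.
        char half = s.charAt(1);
        System.out.println(Character.isHighSurrogate(half)); // true

        // codePointAt(1) decodes both halves into a single int code point.
        System.out.printf("U+%X%n", s.codePointAt(1));       // U+1F600

        // Iterating by code point sees exactly two characters.
        s.codePoints().forEach(cp -> System.out.printf("U+%04X%n", cp));
        // prints U+0061, then U+1F600
    }
}
```

The takeaway is that any code that treats one char as one character (indexing with `charAt`, counting with `length()`) silently miscounts or splits characters like this one. The code-point methods on `String` and `Character`, which work with `int` values, avoid that problem.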
